External ranking factors

Why inbound links to sites are taken into account

As you can see from the previous section, many factors influencing

the ranking process are under the control of webmasters. If these were

the only factors then it would be impossible for search engines to

distinguish between a genuine high-quality document and a page created

specifically to achieve high search ranking but containing no useful

information. For this reason, an analysis of inbound links to the page

being evaluated is one of the key factors in page ranking. This is the

only factor that is not controlled by the site owner.

It makes sense to assume that interesting sites will have more

inbound links. This is because owners of other sites on the Internet

will tend to publish links to a site if they think it is a

worthwhile resource. The search engine will use this inbound link

criterion in its evaluation of document significance.

Therefore, two main factors influence how pages are stored by the search engine and sorted for display in search results:

- Relevance, as described in the previous section on internal ranking factors.

- Number and quality of inbound links, also known as link citation, link popularity or citation index. This will be described in the next section.

Link importance (citation index, link popularity)

You can easily see that simply counting the number of inbound links

does not give us enough information to evaluate a site. It is obvious

that a link from www.microsoft.com should mean much more than a link

from some homepage like www.hostingcompany.com/~myhomepage.html. You

have to take into account link importance as well as the number of links.

Search engines use the notion of citation index to evaluate the

number and quality of inbound links to a site. Citation index is a

numeric estimate of the popularity of a resource expressed as an

absolute value representing page importance. Each search engine uses its

own algorithms to estimate a page citation index. As a rule, these

values are not published.

As well as the absolute citation index value, a scaled citation index

is sometimes used. This relative value indicates the popularity of a

page relative to the popularity of other pages on the Internet. You will

find a detailed description of citation indexes and the algorithms used

for their estimation in the next sections.

Link text (anchor text)

The link text of any inbound site link is vitally important in search

result ranking. The anchor (or link) text is the text between the HTML

tags `<a>` and `</a>` and is displayed as the text that you click in a

browser to go to a new page. If the link text contains appropriate

keywords, the search engine regards it as an additional and highly

significant recommendation that the site actually contains valuable

information relevant to the search query.
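To make the idea of anchor text concrete, here is a minimal sketch that pulls the link target and link text out of a fragment of HTML using Python's standard `html.parser` module. The class name `AnchorTextCollector`, the helper `anchor_texts` and the sample markup are hypothetical and chosen only for illustration; this shows how anchor text can be read from a page, not how any search engine actually processes it.

```python
from html.parser import HTMLParser


class AnchorTextCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> tag currently open, if any
        self._chunks = []    # text pieces seen inside the open <a> tag

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so <A> and <a> both arrive as "a".
        if tag == "a":
            self._href = dict(attrs).get("href", "")
            self._chunks = []

    def handle_data(self, data):
        if self._href is not None:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse runs of whitespace inside the link text.
            text = " ".join("".join(self._chunks).split())
            self.links.append((self._href, text))
            self._href = None


def anchor_texts(html):
    """Return a list of (href, anchor text) pairs found in the HTML string."""
    parser = AnchorTextCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical sample markup.
sample = '<p>Visit <a href="http://example.com/cars">used   car sales</a> today.</p>'
print(anchor_texts(sample))
```

In this sample the anchor text is "used car sales", so a page linked this way would be recommended for those keywords.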

Relevance of referring pages

As well as link text, search engines also take into account the overall information content of each referring page.

Example: Suppose we are using SEO to promote a car sales resource.

In this case a link from a site about car repairs will have much more

importance than a similar link from a site about gardening. The first

link is published on a resource having a similar topic so it will be

more important for search engines.

Google PageRank – theoretical basics

Google was the first company to patent a system for

taking inbound links into account. The algorithm was named PageRank. In

this section, we will describe this algorithm and how it can influence

search result ranking.

PageRank is estimated separately for each web page and is determined

by the PageRank (citation) of other pages referring to it. It is a kind

of “virtuous circle.” The main task is to find the criterion that

determines page importance. In the case of PageRank, it is the

possible frequency of visits to a page.

I shall now describe how a user’s behavior is modeled when following links to surf

the network. It is assumed that the user starts viewing

sites from some random page. Then he or she follows links to other web

resources. There is always a possibility that the user may leave a site

without following any outbound link and start viewing documents from a

random page. The PageRank algorithm estimates the probability of this

event as 0.15 at each step. The probability that our user continues

surfing by following one of the links available on the current page is

therefore 0.85, assuming that all links are equal in this case. If he or

she continues surfing indefinitely, popular pages will be visited many

more times than the less popular pages.

The PageRank of a specified web page is thus defined as the probability that a user may visit the web page.

It follows that the sum of probabilities for all existing web pages is

exactly one because the user is assumed to be visiting at least one

Internet page at any given moment.
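The random surfer model is often written as a formula. The version below is a sketch in commonly used notation, not necessarily the exact expression used by Google. The symbols are assumptions introduced here for illustration: N is the total number of pages, d = 0.85 is the probability of following a link, T_1 ... T_n are the pages that link to page A, and C(T_i) is the number of outbound links on page T_i.

```latex
PR(A) = \frac{1 - d}{N} + d \sum_{i=1}^{n} \frac{PR(T_i)}{C(T_i)}
```

The first term is the chance of arriving at A by a random jump (0.15 spread evenly over all pages), and the second is the chance of arriving by following a link. Written this way, the values for all pages add up to one, provided every page has at least one outbound link.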

Since it is not always convenient to work with these probabilities,

the PageRank can be mathematically transformed into a more easily

understood number for viewing. For instance, we are used to seeing a

PageRank number between zero and ten on the Google Toolbar.

According to the ranking model described above:

- Each page on the Net (even if there are no inbound links to it)

initially has a PageRank greater than zero, although it will be very

small. There is a tiny chance that a user may accidentally navigate to

it.

- Each page that has outbound links distributes part of its PageRank

to the referenced pages. The PageRank contributed to these linked-to

pages is inversely proportional to the total number of links on the

linked-from page – the more links it has, the lower the PageRank

allocated to each linked-to page.

- A “damping factor” is applied to this process so that the

total distributed page rank is reduced by 15%. This is equivalent to the

probability, described above, that the user will not visit any of the

linked-to pages but will navigate to an unrelated website.
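The three rules above can be sketched as a short iterative calculation. This is a minimal illustration of the textbook form of the model, not Google's implementation. The function name `pagerank`, the sample link graph and the treatment of pages with no outbound links (their rank is spread evenly over all pages) are assumptions made for this example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate PageRank for a small link graph.

    links maps each page to the list of pages it links to.
    Returns a dict mapping page -> probability of a visit.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start from a uniform distribution: the surfer begins on a random page.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Rule 1: every page receives the random-jump share,
        # so even a page with no inbound links has a rank above zero.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Rules 2 and 3: the damped rank is split equally
                # among the page's outbound links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Assumption: a page without outbound links passes its
                # damped rank evenly to all pages, keeping the total at one.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page site; "orphan" has no inbound links.
graph = {
    "home": ["about", "products"],
    "about": ["home"],
    "products": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(graph)
for page, value in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {value:.4f}")
```

In this sample graph the "home" page, which receives links from every other page, ends up with the highest value, while "orphan" keeps only the small random-jump share.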

Let us now see how this PageRank process might influence the process

of ranking search results. We say “might” because the pure PageRank

algorithm just described has not been used in the Google algorithm for

quite a while now. We will discuss a more current and sophisticated

version shortly. There is nothing difficult about the PageRank influence

– after the search engine finds a number of relevant documents (using

internal text criteria), they can be sorted according to the PageRank

since it would be logical to suppose that a document having a larger

number of high-quality inbound links contains the most valuable

information.

Thus, the PageRank algorithm "pushes up" those documents that are most popular outside the search engine as well.
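The two-step process just described, first selecting relevant documents and then ordering them by PageRank, might look like the sketch below. The relevance test (every query word appears in the page text), the function name `rank_results` and the sample documents with their PageRank values are all hypothetical simplifications for illustration only.

```python
def rank_results(query, documents):
    """Keep documents containing every query term, then sort by PageRank."""
    terms = query.lower().split()
    relevant = [
        doc for doc in documents
        if all(term in doc["text"].lower() for term in terms)
    ]
    # Highest PageRank first.
    return sorted(relevant, key=lambda doc: doc["pagerank"], reverse=True)


# Hypothetical documents with made-up PageRank values.
documents = [
    {"url": "a.example/cars", "text": "Used car sales and prices", "pagerank": 0.02},
    {"url": "b.example/garden", "text": "Gardening tips", "pagerank": 0.30},
    {"url": "c.example/autos", "text": "Car sales near you", "pagerank": 0.11},
]
results = rank_results("car sales", documents)
print([doc["url"] for doc in results])
```

The gardening page has the highest PageRank of the three, but it is filtered out because it is not relevant to the query. PageRank only reorders the documents that passed the relevance step.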

Google PageRank – practical use

Currently, PageRank is not used directly in the Google algorithm.

This is to be expected since pure PageRank characterizes only the number

and the quality of inbound links to a site, but it completely ignores

the text of links and the information content of referring pages. These

factors are important in page ranking and they are taken into account in

later versions of the algorithm. It is thought that the current Google

ranking algorithm ranks pages according to thematic PageRank. In other

words, it emphasizes the importance of links from pages with content

related by similar topics or themes. The exact details of this algorithm

are known only to Google developers.

You can determine the PageRank value for any web page with the help

of the Google ToolBar that shows a PageRank value within the range from 0

to 10. It should be noted that the Google ToolBar does not show the

exact PageRank probability value, but the PageRank range a particular

site is in. Each range (from 0 to 10) is defined according to a

logarithmic scale.

Here is an example: each page has a real PageRank value known only

to Google. To derive a displayed PageRank range for their ToolBar, they

use a logarithmic scale as shown in this table:

| Real PR | ToolBar PR |
| --- | --- |
| 1-10 | 1 |
| 10-100 | 2 |
| 100-1,000 | 3 |
| 1,000-10,000 | 4 |

Etc.

This shows that the PageRank ranges displayed on the Google ToolBar

are not all equal. It is easy, for example, to increase PageRank from

one to two, while it is much more difficult to increase it from six to

seven.
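The logarithmic mapping in the table can be expressed as a small function. This is only a sketch of the illustrative table above: the base of 10, the units of "real PR" and the decision to place each boundary value in the higher range are assumptions, since the actual scale is known only to Google. The function name `toolbar_pr` is hypothetical.

```python
def toolbar_pr(real_pr, base=10, max_value=10):
    """Map an illustrative "real PR" number to a 0-10 ToolBar value.

    Each ToolBar step covers a range `base` times wider than the previous one.
    """
    value = 0
    threshold = 1
    while real_pr >= threshold and value < max_value:
        value += 1
        threshold *= base
    return value


for real in (5, 50, 500, 5000):
    print(real, "->", toolbar_pr(real))
```

Under these assumed numbers, moving from the bottom of range 1 to range 2 takes an increase of 9 units of real PR, while moving from the bottom of range 6 (100,000) to range 7 (1,000,000) takes an increase of 900,000.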

In practice, PageRank is mainly used for two purposes:

1. Quick check of a site’s popularity. PageRank does not give exact

information about referring pages, but it allows you to quickly and

easily get a feel for the site’s popularity level and to follow trends

that may result from your SEO work. You can use the following “rule of

thumb” measures for English-language sites: PR 4-5 is typical for most

sites with average popularity. PR 6 indicates a very popular site while

PR 7 is almost unreachable for a regular webmaster. You should

congratulate yourself if you manage to achieve it. PR 8, 9, 10 can only

be achieved by the sites of large companies such as Microsoft, Google,

etc. PageRank is also useful when exchanging links and in similar

situations. You can compare the quality of the pages offered in the

exchange with pages from your own site to decide if the exchange should

be accepted.

2. Evaluation of the competitiveness level for a search query. This is a

vital part of SEO work. Although PageRank is not used directly in the

ranking algorithms, it allows you to indirectly evaluate relative site

competitiveness for a particular query. For example, if the search

engine displays sites with PageRank 6-7 in the top search results, a

site with PageRank 4 is not likely to get to the top of the results list

using the same search query.

It is important to recognize that the PageRank values displayed on

the Google ToolBar are recalculated only occasionally (every few months)

so the Google ToolBar displays somewhat outdated information. This

means that the Google search engine tracks changes in inbound links much

faster than these changes are reflected on the Google ToolBar.

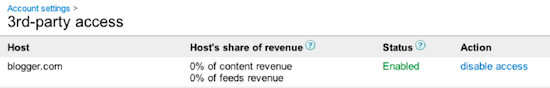

The error message above can occur when 3rd-party Access has been

disabled in your AdSense account. To re-enable access to your AdSense

account by Blogger, please log in to your AdSense account, and click the

Account Settings link. You'll see an enable access

link next to blogger.com, you must click this link in order to create

ads with Blogger tools. You will then be able to log back into

blogger.com and continue setting up AdSense from the Earnings tab.

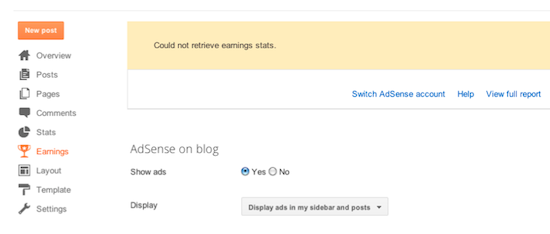

The error message above can occur when 3rd-party Access has been

disabled in your AdSense account. To re-enable access to your AdSense

account by Blogger, please log in to your AdSense account, and click the

Account Settings link. You'll see an enable access

link next to blogger.com, you must click this link in order to create

ads with Blogger tools. You will then be able to log back into

blogger.com and continue setting up AdSense from the Earnings tab.

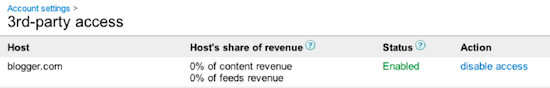

The error message above can occur when 3rd-party Access has been

disabled in your AdSense account. To re-enable access to your AdSense

account by Blogger, please log in to your AdSense account, and click the

Account Settings link. You'll see an enable access

link next to blogger.com, you must click this link in order to create

ads with Blogger tools. You will then be able to log back into

blogger.com and continue setting up AdSense from the Earnings tab.

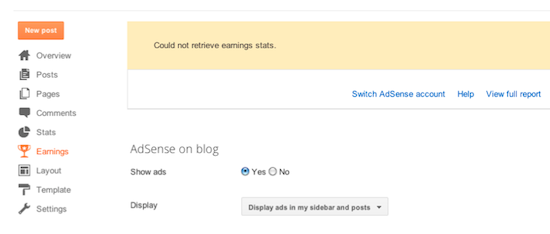

The error message above can occur when 3rd-party Access has been

disabled in your AdSense account. To re-enable access to your AdSense

account by Blogger, please log in to your AdSense account, and click the

Account Settings link. You'll see an enable access

link next to blogger.com, you must click this link in order to create

ads with Blogger tools. You will then be able to log back into

blogger.com and continue setting up AdSense from the Earnings tab.